Political Science Educator: volume 28, issue 2

The Teacher-Scholar

By Ozlem Tuncel (otuncelgurlek1@gsu.edu)

Generative AI tools like ChatGPT have rapidly transformed the academic landscape, prompting political science educators to reconsider their teaching strategies. The integration of these technologies raises important pedagogical questions: How can we responsibly incorporate AI into our classrooms while fostering the essential skills required for rigorous literature reviews? In the past, teaching literature reviews posed challenges due to students’ reliance on manual searches and limited access to diverse resources, often requiring significant time and effort to locate relevant materials. These hurdles left less room for focusing on higher-order skills like critical analysis and synthesis of information. While digital databases eased some of these burdens, generative AI tools like ChatGPT present a new paradigm, offering unprecedented efficiency in gathering and organizing information. This shift prompts educators to reconsider how to balance the convenience of AI with the development of core academic competencies. Although some view generative AI as a challenge to academic integrity, I believe this technology offers opportunities to enhance learning by helping students develop new competencies and refine their critical thinking. In this reflection, I draw on my experience with AI-related educational resources to share practical insights on how AI can support the teaching of literature reviews in political science courses.

Using Generative AIs for Literature Reviews

Conversations with colleagues reveal a shared frustration: student-submitted literature reviews generated by AI often contain fabricated information and incorrect citations. While these concerns are understandable, we are at a pivotal moment in academia where generative AI should be viewed not as a threat but as a tool to “augment our thinking” (Torres and Nemeroff 2024). This reflection aims to provide practical strategies for incorporating AI tools into teaching literature reviews. The activities outlined can be used as short classroom exercises to foster critical thinking (Lang 2021) or expanded into more comprehensive “flipped classroom” sessions for writing-intensive and research-focused courses.

AI Tools for Literature Review

Generative AI tools (like ChatGPT, Gemini, Copilot, and Perplexity) have quickly become valuable resources for identifying relevant scholarly work. In response to this AI evolution, major academic databases like Web of Science, Scopus, EBSCO, ProQuest, JSTOR, and LexisNexis have also begun integrating AI assistants. Beyond these well-known tools, several specialized (often subscription-based) AI platforms cater specifically to academic research needs. Elicit, Scholarcy, OpenRead, Semantic Scholar, Consensus, Scite.ai, and Litmaps are a few examples of this growing field. These tools promise to save time, uncover connections between studies, and offer a more comprehensive understanding of research landscapes. But the critical question remains: How should we use these tools effectively in our classrooms?

Mishaps of Generative AI with Literature Review

Based on my experience and conversations with colleagues, generative AI tools like ChatGPT often introduce significant errors in literature reviews. These tools can hallucinate, fabricate information, and produce inaccurate citations – serious issues in academic research, where accuracy and reliability are crucial. One major limitation is that conventional AI tools lack the specialized knowledge and contextual understanding needed for thorough academic analysis. As a result, they may provide outdated information, misinterpret complex concepts, or fail to differentiate between peer-reviewed sources and unreliable publications.

To address these challenges in the classroom, I recommend starting with an exercise where students critically evaluate an AI-generated essay. Choosing a familiar topic – such as one previously discussed in class or related to the course – ensures that both students and instructors can identify AI inaccuracies more easily. The AI-generated output will likely miss key nuances or omit important readings, especially if the tool has limited access to academic databases.

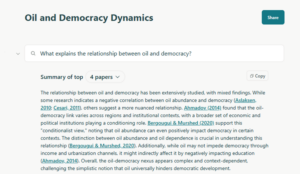

For instance, I used Elicit to explore the literature on the relationship between oil and democracy. I prompted the tool with “What explains the relationship between oil and democracy?” as shown in Figure 1. The summary it generated highlighted key themes and trends, offering a useful starting point. However, I quickly noticed the absence of foundational works like Ross (2001), a key source in this field. This gap illustrates why human oversight is crucial: even specialized AI tools may not cover all relevant literature comprehensively.

Figure 1. Elicit Reports on “What Explains the Relationship Between Oil and Democracy?” Prompt

Note: This prompt was initiated on 11 November 2024 at Elicit.org using a basic free plan.

Classroom exercises that expose students to these limitations can be highly educational. By analyzing AI-generated outputs in group discussions, students learn to identify errors and understand the importance of critical evaluation. This process not only highlights the pitfalls of over-relying on AI but also reinforces the role of human judgment in academic research. As Mollick and Mollick (2023) suggest, providing diverse examples and encouraging critical reflection helps students develop the skills needed to navigate AI tools effectively. By fostering this critical mindset, we empower students to use AI as a complementary resource rather than a substitute for rigorous research practices.

Rethinking Literature Reviews with AI

Instead of asking generative AI tools to write literature reviews outright, we can harness their capabilities to teach students essential aspects of the literature review process. Traditionally, this involves identifying key works, synthesizing findings, and situating research within existing scholarship. AI can complement these steps by offering valuable support and enhancing student learning.

For instance, generative AI can effectively summarize complex texts, such as journal articles with advanced methodologies. By providing concise overviews, AI tools help students quickly grasp core arguments and key concepts. A practical exercise could involve having an AI summarize an article, then guiding students to critique or refine that summary. This not only develops their critical reading skills but also teaches them to assess the accuracy and nuance of AI outputs.

Generative AI can also assist in identifying research gaps. When prompted thoughtfully, AI tools can suggest areas needing further investigation, sparking critical reflection. This back-and-forth interaction with AI can form the basis of a classroom activity in which students critically evaluate AI-generated suggestions. Although AI cannot replicate the depth of human academic discussions (Raymond 2024), it can simulate meaningful interactions by offering students a personalized and reflective learning experience.

Another valuable use of AI is in generating initial bibliographies. While AI-generated bibliographies require verification for accuracy and relevance, they can provide a solid starting point for literature searches. I have found AI tools particularly effective for keyword identification. Students often struggle to pinpoint the right keywords, and AI tools can generate lists of synonyms and related terms. For example, when I asked ChatGPT to create a search string for factors explaining the relationship between oil and democracy using Boolean terms within Web of Science, it not only provided alternative keywords but also explained the rationale behind the search string (see Figure 2).

Figure 2. Results for the ChatGPT Prompt for Keyword Search

Note: This prompt was initiated on 26 November 2024 using ChatGPT-4.
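
For instructors who want students to see how such a search string is assembled, the logic can be sketched in a few lines of Python. This is a minimal illustration, not the output shown in Figure 2: the keyword lists are my own illustrative choices, the function name `build_topic_query` is hypothetical, and the sketch assumes the `TS=` (topic) field tag used in Web of Science advanced search. Synonyms for one concept are joined with OR, and the concepts are then joined with AND.

```python
def build_topic_query(concept_groups, field_tag="TS"):
    """Build a Boolean search string from groups of synonyms.

    Terms within a group are alternatives (OR); groups must all match (AND).
    The default field tag "TS" is assumed to mean "topic" in the target database.
    """
    clauses = []
    for terms in concept_groups:
        # Put multi-word terms in quotation marks so they are searched as phrases.
        quoted = [f'"{term}"' if " " in term else term for term in terms]
        clauses.append("(" + " OR ".join(quoted) + ")")
    return f"{field_tag}=(" + " AND ".join(clauses) + ")"


# Illustrative keyword lists (assumptions, not the terms ChatGPT returned).
oil_terms = ["oil", "petroleum", "resource curse"]
democracy_terms = ["democracy", "democratization", "regime type"]

query = build_topic_query([oil_terms, democracy_terms])
print(query)
# TS=((oil OR petroleum OR "resource curse") AND (democracy OR democratization OR "regime type"))
```

In class, students can compare a string built this way with the one an AI tool proposes, then discuss which synonyms each version misses and how adding or removing a term changes the results.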

Incorporating AI into the literature review process offers numerous teaching opportunities. These tools provide instant feedback and facilitate continuous interaction, which are highly valued in the learning process. By strategically integrating AI into our teaching, we can help students develop critical skills, enhance their understanding, and navigate the complexities of academic research more effectively.

Final Thoughts on Balancing AI and Critical Thinking

Generative AI presents both opportunities and challenges for teaching literature reviews. As educators, our role is to frame AI not as a replacement but as a powerful tool to enhance critical thinking and research skills. By integrating AI thoughtfully, we equip students to navigate academic research with a balanced approach – leveraging technology while honing essential skills like analysis, verification, and ethical judgment.

Throughout this reflection, I have emphasized that AI should supplement, not substitute, traditional research practices. Exercises that incorporate AI can reinforce crucial lessons about source verification, rigor, and credibility. Ultimately, the goal is to guide students in using AI responsibly and critically. By fostering discussions around ethical considerations and responsible AI use, we empower students to become discerning researchers who can harness technology without compromising academic integrity. AI might change the tools we use, but the core principles of critical thinking, rigor, and ethical scholarship remain timeless.

References

Lang, James M. 2021. Small Teaching: Everyday Lessons from the Science of Learning, 2nd Edition. Hoboken, NJ: Jossey-Bass.

Mollick, Ethan R., and Lilach Mollick. 2023. “Using AI to Implement Effective Teaching Strategies in Classrooms: Five Strategies, Including Prompts.” The Wharton School Research Paper, March 17. https://ssrn.com/abstract=4391243.

Raymond, Chad. 2024. “AI and the Case for Project-Based Teaching.” The Chronicle of Higher Education, August 27. https://www.chronicle.com/article/ai-and-the-case-for-project-based-teaching.

Ringmar, Erik. 2024. “Political Science Exams in the Age of AI.” Political Science Educator: A Newsletter of the Political Science Education Section of the American Political Science Association 28 (1): 72-75.

Ross, Michael L. 2001. “Does Oil Hinder Democracy?” World Politics 53 (3): 325-361.

Torres, JT, and Adam Nemeroff. 2024. “Are We Asking the Wrong Questions About ChatGPT?” The Chronicle of Higher Education, April 15. https://www.chronicle.com/article/are-we-asking-the-wrong-questions-about-chatgpt.

—

Ozlem Tuncel is a Lecturer and Data Services Specialist at the Research Data Services Department at Georgia State University. Her research agenda focuses on political parties, opposition cooperation and coordination, democratic backsliding, and authoritarian regimes.

Published since 2005, The Political Science Educator is the newsletter of the Political Science Education Section of the American Political Science Association. As part of APSA’s mission to support political science education across the discipline, APSA Educate has republished The Political Science Educator since 2021. Please visit APSA Educate’s Political Science Educator digital collection here.

Editors: Colin Brown (Northeastern University), Matt Evans (Northwest Arkansas Community College)

Submissions: editor.PSE.newsletter@gmail.com